
Building trust in AI analytics: strategies for data teams

Your AI model crushed every metric in development, but nobody will act on it. Here's how data teams are closing the trust gap with strategies you can apply to your own warehouse, models, and stakeholders.

You've probably been there. Someone asks "What's driving churn this quarter?" and an agent in your analytics platform retrieves an answer straight from the warehouse. It comes back in seconds. And then comes the follow-up you knew was coming: "But how confident are we in this?"

It's a fair question. AI analytics can spot patterns you'd miss manually and cut weeks of work down to minutes. But speed means nothing if nobody trusts the output. According to Hex's State of Data Teams 2025 report, which surveyed over 2,000 data professionals, 77% are excited about AI, but only 3% said it was a main focus area. Most teams are stuck in the middle: they've deployed AI, their stakeholders are curious, but the organizational confidence to act on AI-generated insights isn't there yet. That gap is closing fast, and the teams pulling ahead are the ones treating trust as a design problem, not an afterthought.

Why AI analytics trust breaks down

Right now, three forces are working against you.

Data quality remains the bottleneck nobody wants to talk about. In Hex's survey, 84% of data professionals rated it a top focus area, and when AI works from messy data, stakeholders quickly learn not to trust the outputs.

Then there's the black-box problem: when stakeholders can't see why a model produced a particular answer, they stop using it.

Finally, ungoverned tools are spreading faster than organizations can respond. Business users aren't waiting for your data team to figure out governed AI access. They're already pasting questions into ChatGPT and other general-purpose tools to get answers that used to go through the analytics request queue. The tools themselves aren't dangerous, but answers generated without warehouse context, metric definitions, or governance guardrails can be wrong in ways that are hard to detect until a bad decision has already been made.

Everyone on a data team cares about reproducibility, lineage, and risk. These aren't concerns that map neatly to individual roles. But the trust gap hits hardest with business users and data leaders, because they're the ones being asked to act on outputs they can't fully verify. A data scientist can inspect model weights and rerun an analysis. A VP making a $2 million decision based on a churn prediction doesn't have that option. They need to trust the system that produced it.

Each problem feeds the next. Bad data erodes confidence, black-box outputs prevent diagnosis, and shadow tools proliferate to fill the gap. Building AI analytics trust means working on three fronts at once: your technical workflows, how you communicate with stakeholders, and how your organization learns to trust AI over time.

Ground AI analytics in governed data

You can't bolt trust onto a workflow after the fact. It has to be built in from the start, and that means your governance, your monitoring, and your human review processes need to work together, not live in separate tools.

Centralize governance around your data warehouse

The simplest way to make AI-generated insights trustworthy is to ground them in the same governed data your team already maintains. Your AI queries should operate within the same permission and lineage framework as every other form of data access, so when a stakeholder asks "where did this number come from?", you can trace it back to source tables and transformations.
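To make "trace it back to source tables and transformations" concrete, here is a minimal sketch that walks a lineage graph upstream from a metric. The graph, its node names, and `trace_to_sources` are hypothetical; in practice the edges would come from your transformation tool's metadata or your warehouse's lineage information rather than a hand-written dict.

```python
from collections import deque

# Hypothetical lineage graph: each node maps to the upstream nodes it is built from.
# A node with no upstream entry is treated as a source table.
LINEAGE: dict[str, list[str]] = {
    "metric.monthly_churn_rate": ["model.fct_subscriptions"],
    "model.fct_subscriptions": ["model.stg_subscriptions", "model.stg_customers"],
    "model.stg_subscriptions": ["raw.billing.subscriptions"],
    "model.stg_customers": ["raw.crm.customers"],
}


def trace_to_sources(
    node: str, lineage: dict[str, list[str]]
) -> tuple[list[str], list[str]]:
    """Breadth-first walk upstream from `node`.

    Returns (intermediate transformations in visit order, sorted source tables).
    """
    transformations: list[str] = []
    sources: list[str] = []
    seen = {node}
    queue = deque([node])
    while queue:
        current = queue.popleft()
        parents = lineage.get(current, [])
        if not parents:
            # Nothing upstream: this is where the number originates.
            sources.append(current)
            continue
        if current != node:
            transformations.append(current)
        for parent in parents:
            if parent not in seen:  # shared upstream models are visited only once
                seen.add(parent)
                queue.append(parent)
    return transformations, sorted(sources)


steps, sources = trace_to_sources("metric.monthly_churn_rate", LINEAGE)
print("Transformations:", steps)
print("Source tables:", sources)
```

The answer to "where did this number come from?" is then the list of transformations plus the raw tables at the end of every path.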

Data governance in the AI era covers more ground than most teams realize. It goes beyond individual models to cover your data, your prompts, your workflows, your human decision points, and everything downstream. For analytics engineers, this hits especially close to home. AI-generated SQL can create technical debt and shadow metrics if it operates outside your semantic layer. But when AI queries run against the same governed metric definitions your dbt models enforce, you get consistency by default rather than chasing it after the fact.

Teams don't need to build out full semantic models to get started. Endorsing tables, adding warehouse descriptions, and setting workspace rules give agents enough context to produce governed answers from day one. But when answers need to be 100% accurate, semantic models are the most robust path to get there.
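"Consistency by default" can be sketched as a single registry of governed metric definitions that every query path compiles from, whether the request comes from a person or an agent. This is an illustrative assumption, not dbt's or Hex's API: `MetricDefinition`, `REGISTRY`, and `compile_metric_query` are hypothetical names, and a real semantic layer handles joins, time grains, and filters far more thoroughly.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MetricDefinition:
    """One governed metric: a single expression over a single endorsed table."""
    name: str
    expression: str               # SQL aggregate expression
    table: str                    # endorsed source table
    where: Optional[str] = None   # filter that is part of the definition itself


# Hypothetical registry standing in for a semantic layer.
REGISTRY: dict[str, MetricDefinition] = {
    m.name: m
    for m in [
        MetricDefinition(
            name="active_customers",
            expression="COUNT(DISTINCT customer_id)",
            table="analytics.fct_subscriptions",
            where="status = 'active'",
        ),
        MetricDefinition(
            name="mrr",
            expression="SUM(monthly_amount)",
            table="analytics.fct_subscriptions",
            where="status = 'active'",
        ),
    ]
}


class UngovernedMetricError(KeyError):
    """Raised when a caller asks for a metric the registry does not define."""


def compile_metric_query(metric_name: str, group_by: Optional[list[str]] = None) -> str:
    """Build SQL from the governed definition; never improvise a new one."""
    metric = REGISTRY.get(metric_name)
    if metric is None:
        raise UngovernedMetricError(metric_name)
    dims = list(group_by or [])
    select_cols = dims + [f"{metric.expression} AS {metric.name}"]
    sql = f"SELECT {', '.join(select_cols)} FROM {metric.table}"
    if metric.where:
        sql += f" WHERE {metric.where}"
    if dims:
        sql += f" GROUP BY {', '.join(dims)}"
    return sql


# A person and an agent asking for the same metric get the same SQL.
print(compile_metric_query("active_customers", group_by=["region"]))
```

The design choice that matters is the refusal path: an undefined metric raises an error instead of letting the caller write its own SQL, which is exactly how shadow metrics get created.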

In Hex, the Modeling Agent helps platform owners define and maintain those models, so every downstream query, whether written by a person or generated by AI, computes metrics consistently.

Implement continuous validation

One-time audits catch problems that existed when you ran the audit. Production AI workflows need ongoing monitoring, and your data teams and your business stakeholders care about different signals.

On the analytics side, you're watching whether agents are returning accurate, governed answers: whether they're using the right metric definitions, querying the right tables, and producing results that hold up to scrutiny.

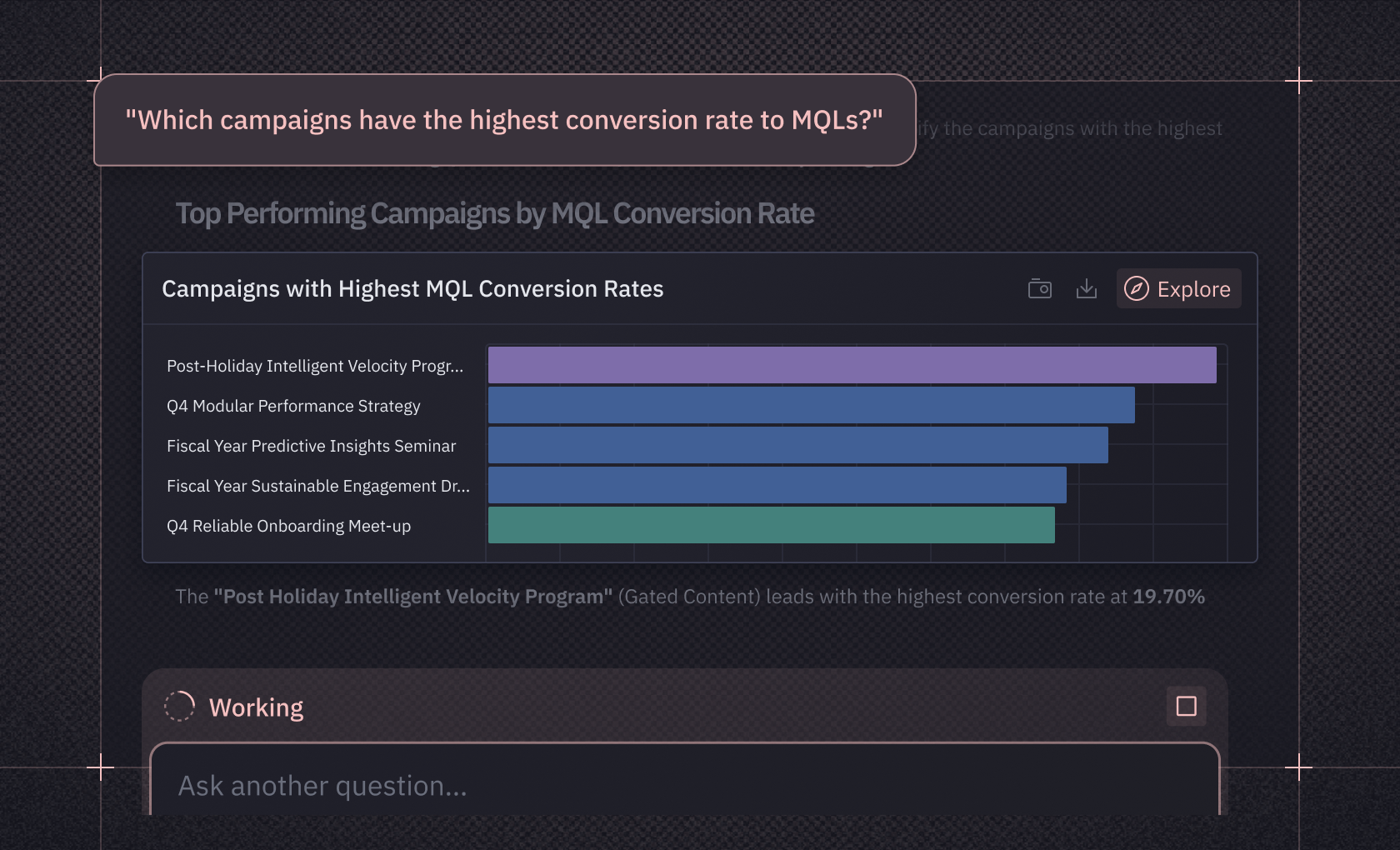

In Hex, Context Studio gives data teams visibility into exactly this: what questions are being asked, how often agents produce quality issues, and which topics are relying on unstructured data rather than governed definitions. That observability turns validation from a periodic audit into a continuous feedback loop. Teams can see where governance gaps exist and prioritize what to model next.
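A continuous validation check can start very simply: inspect each agent answer for tables and metrics that fall outside your governed set, then track the share of answers with issues over time. The sketch below assumes hypothetical `AgentAnswer` records that list which tables and metrics an answer used; it is not how Context Studio computes its signals.

```python
from dataclasses import dataclass, field

# Hypothetical governed sets; in practice these would come from table
# endorsements and semantic model definitions.
ENDORSED_TABLES = {"analytics.fct_subscriptions", "analytics.dim_customers"}
GOVERNED_METRICS = {"active_customers", "monthly_churn_rate"}


@dataclass
class AgentAnswer:
    question: str
    tables_queried: set[str]
    metrics_used: set[str] = field(default_factory=set)


def validate_answer(answer: AgentAnswer) -> list[str]:
    """Return governance issues for one answer (an empty list means clean)."""
    issues = [f"unendorsed table: {t}" for t in sorted(answer.tables_queried - ENDORSED_TABLES)]
    issues += [f"ungoverned metric: {m}" for m in sorted(answer.metrics_used - GOVERNED_METRICS)]
    return issues


def issue_rate(answers: list[AgentAnswer]) -> float:
    """Share of answers with at least one issue; compute it per day or per week."""
    if not answers:
        return 0.0
    return sum(1 for a in answers if validate_answer(a)) / len(answers)


answers = [
    AgentAnswer("How many active customers?", {"analytics.fct_subscriptions"}, {"active_customers"}),
    AgentAnswer("What's churn by plan?", {"raw.tmp_churn_export"}, {"churn_pct"}),
]
for a in answers:
    print(a.question, validate_answer(a) or "ok")
print(f"Issue rate: {issue_rate(answers):.0%}")
```

Grouping the flagged answers by topic is one way to decide what to model next.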

On the business side, you're tracking whether the decisions informed by AI are better than the decisions made without it. Most teams over-index on technical metrics while ignoring whether business outcomes actually improved. Both matter, but if you can only start with one, start with the business outcomes and work backward to the technical causes.

Design circuit breakers for uncertain outputs

AI systems often produce confident-sounding answers even when the underlying context is thin, which makes errors harder to catch than traditional software bugs. You need routing patterns that flag low-confidence outputs for human review before they reach stakeholders. That might mean questions where the agent doesn't have enough governed context to answer well, outputs that rely on ungoverned or undocumented tables, or cases where the question itself is ambiguous enough that multiple valid interpretations exist.
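A circuit breaker along these lines can be expressed as a small routing function that checks each draft answer against the three triggers above before it reaches a stakeholder. The `DraftAnswer` fields and the 0.8 coverage threshold are assumptions for illustration; how you estimate governed-context coverage or detect ambiguity depends on your own platform.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    DELIVER = "deliver"
    HUMAN_REVIEW = "human_review"


@dataclass
class DraftAnswer:
    # Assumed 0.0 to 1.0: share of referenced fields that have governed definitions.
    governed_context_coverage: float
    uses_undocumented_tables: bool
    # Assumed count of plausible readings of the question.
    interpretations: int


def route(draft: DraftAnswer, min_coverage: float = 0.8) -> tuple[Route, list[str]]:
    """Send a draft to human review if any trigger fires; otherwise deliver it."""
    reasons: list[str] = []
    if draft.governed_context_coverage < min_coverage:
        reasons.append("thin governed context")
    if draft.uses_undocumented_tables:
        reasons.append("relies on undocumented tables")
    if draft.interpretations > 1:
        reasons.append("ambiguous question")
    return (Route.HUMAN_REVIEW if reasons else Route.DELIVER), reasons


print(route(DraftAnswer(0.95, False, 1)))
print(route(DraftAnswer(0.60, True, 3)))
```

Returning the reasons alongside the route gives the reviewer a head start and makes it easy to break down flag rates by cause later.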

The goal is to apply human judgment where it matters most and let AI handle the rest. Define escalation SLAs just as you would for production incidents: how fast should a flagged output get human review, and who owns that resolution?

Treat escalations as learning opportunities

When too many cases escalate to humans, it negates the efficiency benefits of AI. But when you capture escalations systematically, logging the human resolution alongside the original AI output, the system gets smarter over time. Track escalation rates, resolution patterns, and time-to-resolution alongside your standard model metrics.
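Escalation tracking and the escalation SLAs described earlier can share one small report. This sketch assumes a hypothetical `Escalation` record with a flag time, an optional resolution time, and a resolution category; the four-hour SLA default is an arbitrary placeholder, not a recommendation.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Escalation:
    flagged_at: datetime
    resolved_at: Optional[datetime] = None  # None while the escalation is still open
    resolution: Optional[str] = None        # hypothetical categories, e.g. "wrong_metric"


def escalation_report(
    escalations: list[Escalation],
    total_answers: int,
    now: datetime,
    sla: timedelta = timedelta(hours=4),  # placeholder SLA
) -> dict:
    resolved = [e for e in escalations if e.resolved_at is not None]
    hours = [(e.resolved_at - e.flagged_at).total_seconds() / 3600 for e in resolved]
    # An open escalation counts against the SLA once it has waited longer than `sla`.
    breaches = sum(
        1
        for e in escalations
        if (e.resolved_at if e.resolved_at is not None else now) - e.flagged_at > sla
    )
    return {
        "escalation_rate": len(escalations) / total_answers if total_answers else 0.0,
        "median_hours_to_resolve": median(hours) if hours else None,
        "sla_breaches": breaches,
        "resolution_patterns": Counter(e.resolution for e in resolved),
    }


# Hypothetical example values.
start = datetime(2025, 1, 6, 9, 0)
log = [
    Escalation(start, start + timedelta(hours=1), "wrong_metric"),
    Escalation(start, start + timedelta(hours=6), "missing_context"),
    Escalation(start, start + timedelta(hours=2), "wrong_metric"),
    Escalation(start),  # still open
]
print(escalation_report(log, total_answers=40, now=start + timedelta(hours=10)))
```

A recurring resolution category such as "missing_context" is the signal for where to add governed context next.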

Context Studio can help here too. The same observability dashboard that surfaces agent quality issues also shows you which questions consistently need human intervention, giving you a clear signal for where to invest next.

Make AI analytics explainable across audiences

Explainability is an ongoing practice of matching the right level of detail to each audience, not a feature you ship once. And it's one of the highest-leverage ways to increase trust in AI analytics outputs.

Match the explanation to the decision

Your executives and your account managers need different views of the same output. Executives need the strategic picture: "Which factors generally drive customer churn?" Account managers need the individual prediction: "Why did this specific customer get flagged?" Most teams only build one of these views, and adoption stalls with whichever audience gets left out.

The practical question is how you shape what agents surface for each audience. Workspace rules and prompt configuration give data teams control over the level of detail an agent includes in its answers, whether that's a high-level summary with a confidence indicator for a product manager or the full breakdown of which metric definitions and tables inform the result for an analyst reviewing the work. The goal isn't building separate systems for each audience; it's configuring one system to explain itself at the right depth.

For business stakeholders, feature importance rankings only build trust when they connect to domain knowledge people already have. "Payment history contributed about 35% to this prediction, while customer service interactions contributed roughly 20%" lands because those are concepts a customer success manager already thinks in. Abstract model internals don't. The explanations that build trust are the ones that meet people in their own language.
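One way to produce percentages like "about 35%" is to normalize per-feature attribution magnitudes for a single prediction (for example, absolute SHAP values) so they sum to 100%, then map raw feature names to terms stakeholders already use. The feature names, the `FRIENDLY_NAMES` mapping, and the attribution values below are hypothetical, and normalizing absolute values is one convention among several; it drops the sign, so you may want to report the direction of each effect separately.

```python
# Hypothetical mapping from model feature names to terms a customer success manager uses.
FRIENDLY_NAMES = {
    "days_since_last_payment": "Payment history",
    "support_tickets_90d": "Customer service interactions",
    "seats_used_pct": "Product usage",
}


def contribution_shares(attributions: dict[str, float]) -> dict[str, float]:
    """Normalize absolute per-feature attributions so they sum to 1.0."""
    total = sum(abs(v) for v in attributions.values())
    if total == 0:
        return {name: 0.0 for name in attributions}
    return {name: abs(v) / total for name, v in attributions.items()}


def explain(attributions: dict[str, float], top_n: int = 2) -> str:
    """Plain-language summary of the top contributors to one prediction."""
    shares = contribution_shares(attributions)
    top = sorted(shares.items(), key=lambda kv: kv[1], reverse=True)[:top_n]
    parts = [
        f"{FRIENDLY_NAMES.get(name, name)} contributed about {share:.0%}"
        for name, share in top
    ]
    return "; ".join(parts) + "."


# Hypothetical attributions for one flagged customer (sign = direction of effect).
print(explain({"days_since_last_payment": 0.35, "support_tickets_90d": -0.20, "seats_used_pct": 0.45}))
```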

Be transparent about uncertainty and limitations

Being honest about when your AI is uncertain builds more sustainable trust than claiming perfect accuracy. Show stakeholders when an output falls outside what the system was designed to handle, flag data quality issues that affect reliability, and be upfront about where your governed context has gaps.

This matters for compliance too. Regulations like GDPR include provisions requiring transparency and meaningful information about automated decisions, and sector-specific frameworks are getting more prescriptive. You don't need to turn your analytics workflow into a legal document, but being able to show how a model reached a conclusion, and where it's uncertain, puts you in a much stronger position.

Build progressive disclosure into your interfaces

Not everyone needs the same depth. A product manager asking "what happened to retention last month?" wants a clear answer with a confidence indicator. An analyst reviewing that same output wants to see the SQL, check which tables were queried, and verify the metric definitions match the semantic layer.
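Progressive disclosure can be modeled as one answer object rendered at different depths, rather than separate systems per audience. The `GovernedAnswer` fields, the three audience levels, and the example values are hypothetical; they only illustrate layering a summary with a confidence indicator, then the governed definitions and tables, then the SQL.

```python
from dataclasses import dataclass

# Ordered from least to most detail; each level includes everything before it.
LEVELS = ["business", "reviewer", "analyst"]


@dataclass
class GovernedAnswer:
    summary: str
    confidence: str                      # e.g. "high", "medium", "low"
    metric_definitions: dict[str, str]   # metric name -> governed definition
    tables: list[str]
    sql: str


def render(answer: GovernedAnswer, audience: str) -> str:
    """Render one answer at the depth for `audience`; nothing is dropped, only layered."""
    depth = LEVELS.index(audience)  # raises ValueError for an unknown audience
    lines = [f"{answer.summary} (confidence: {answer.confidence})"]
    if depth >= 1:
        lines += [f"Metric {name}: {d}" for name, d in answer.metric_definitions.items()]
        lines.append("Tables: " + ", ".join(answer.tables))
    if depth >= 2:
        lines.append("SQL:\n" + answer.sql)
    return "\n".join(lines)


# Hypothetical example values.
answer = GovernedAnswer(
    summary="Example: retention dipped last month, mostly on one plan.",
    confidence="high",
    metric_definitions={"retention_rate": "Share of last month's customers still active this month."},
    tables=["analytics.fct_retention"],
    sql="SELECT plan, AVG(retained) AS retention_rate FROM analytics.fct_retention GROUP BY plan",
)
print(render(answer, "business"))
print(render(answer, "analyst"))
```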

In Hex, Threads lets business stakeholders ask questions in plain language and get governed answers, while the underlying SQL, transformations, and data lineage remain one click away for anyone who wants to verify the work.

Nothing is hidden; it's layered. For deeper technical workflows, the Notebook Agent lets data scientists generate, review, and edit code directly, so AI-generated analyses stay inspectable and reproducible rather than disappearing into a black box.

Build organizational trust in AI analytics over time

Technical infrastructure gets you partway. The rest is organizational work: education, communication, and governance that evolves as your AI capabilities mature.

Embed AI into daily workflows, not training sessions

Generic "AI 101" training fails because it's disconnected from daily work. Executives engage when you frame AI around avoiding costly mistakes, presenting it not as a new tool to learn but as a better way to make decisions they're already making. Analysts care about reducing their backlog and stopping the cycle of stakeholders bypassing them for ungoverned tools.

Understanding what each role cares about turns abstract data literacy into something people actually use.

Establish shared standards that people actually follow

Governance that exists only on paper doesn't build trust, and data leaders know this is one of their biggest risks. The governance that works automates metadata tracking so lineage is visible, enforces access controls with clear stewardship roles, vets data quality systematically, and monitors model outcomes for drift and fairness.

The State of Data Teams 2025 report found that 70% of data leaders see self-serve analytics as a worthy goal, but as one senior director put it, "There's always nuance that's hard to control for when talking about self-serve. Both in the data reliability sense but also in the data literacy sense." Standards only work when the tools make compliance the path of least resistance. If governed access is harder to use than ChatGPT, people will keep using ChatGPT.

Showcase trust outcomes, not just success stories

Peer proof is more persuasive than top-down mandates. But the proof that moves other teams is measurable trust outcomes that speak to the problems they're already feeling, not "we built a cool thing."

When ClickUp's data team built a churn app in Hex, they gave business users self-service access with proper guardrails in place. The data team stopped fielding endless ad-hoc requests, delivered more than $1M in churn savings, and proved that governed self-serve could work. Other teams noticed.

At Figma, researcher Rie McGwier used Hex's Notebook Agent to build a product health dashboard program that now serves thousands of employees, refreshing hourly and appearing at leadership offsites. What made this a trust story is what happened next: other teams started building on the same foundation. The data was governed, the methodology was transparent, every transformation was inspectable, and the results were measurable. That visibility is what turns one team's success into organizational confidence.

Track trust directly

Quick feedback loops help you spot adoption barriers early, and ground-level input from the teams using AI tools helps you adapt faster than top-down mandates alone.

Monitor what percentage of key decisions reference AI-informed metrics, whether conflicting metric incidents are trending down, and how stakeholder confidence scores change over time. These aren't vanity metrics. They're the leading indicators that tell you whether your trust-building investment is working before the lagging indicators like adoption and ROI catch up.
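The three trust indicators above can be computed from a simple per-quarter snapshot. The `QuarterSnapshot` fields and values are hypothetical, how you decide which decisions count as "key" or how you survey confidence is up to your organization, and comparing each quarter only to the previous one is a deliberately simple stand-in for a proper trend.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class QuarterSnapshot:
    quarter: str
    key_decisions: int
    decisions_citing_ai_metrics: int
    conflicting_metric_incidents: int
    avg_confidence: float  # e.g. mean of a stakeholder survey on a 1-5 scale


def trust_scorecard(snapshots: list[QuarterSnapshot]) -> list[dict]:
    """One row per quarter, with changes measured against the previous quarter."""
    rows = []
    prev: Optional[QuarterSnapshot] = None
    for s in snapshots:
        rows.append({
            "quarter": s.quarter,
            "pct_decisions_ai_informed": (
                s.decisions_citing_ai_metrics / s.key_decisions if s.key_decisions else 0.0
            ),
            "conflict_incidents": s.conflicting_metric_incidents,
            "conflicts_down_vs_prev": (
                None if prev is None
                else s.conflicting_metric_incidents < prev.conflicting_metric_incidents
            ),
            "confidence_change": (
                None if prev is None else round(s.avg_confidence - prev.avg_confidence, 2)
            ),
        })
        prev = s
    return rows


# Hypothetical example values.
history = [
    QuarterSnapshot("2025-Q1", 40, 10, 6, 3.1),
    QuarterSnapshot("2025-Q2", 50, 20, 3, 3.6),
]
for row in trust_scorecard(history):
    print(row)
```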

What changes when stakeholders trust the data

For data teams, trust-building is the foundation that makes real work possible, not overhead that slows it down. Transparent workflows, explainable outputs, governed metrics, and honest communication about limitations create the conditions where AI analytics can change how your organization decides.

Hex is built with AI at the center of data workflows, with full awareness of your warehouse schema and semantic models, giving data teams the transparency foundation to answer "how confident are we?" with evidence, not hand-waving.

See how your team can build AI analytics trust from the ground up. Request a demo with your own data, or sign up to explore Hex.